Overcome LEADER_NOT_AVAILABLE error on Multi-Broker Apache Kafka Cluster

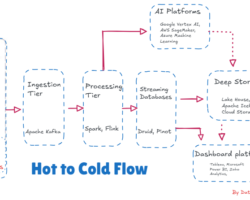

Kafka Connect assumes a significant part in streaming data between Apache Kafka and other data systems. As a tool, it holds the responsibility of a scalable and reliable way to move the data in and out of Apache Kafka. Importing data from the Database set to Apache Kafka is surely perhaps the most well-known use instance of JDBC Connector (Source & Sink) that belongs to Kafka Connect.

This short article aims to elaborate on the steps on how can we resolve the error

“config=LEADER_NOT_AVAILABLE} (org.apache.kafka.clients.NetworkClient:1103)”

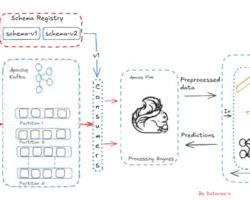

while integrating the Confluent’s JDBC Kafka connector with an operational multi-broker Apache Kafka Cluster for ingesting data from the functioning MySQL Database to Kafka topic. Even though Schema Registry is not mandatory to integrate or run any Kafka Connector like JDBC, File, etc but ideally we cannot use plain JSON, CSV, etc with the JDBC Sink connector. But if we have this kind of data on the source topic, we’ll need to apply a schema to it first and write it to a new topic serialized appropriately.

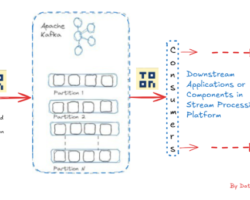

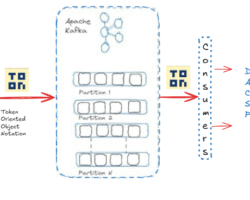

For message producers or consumers on a multi-node Kafka cluster (click here to read how to setup a multi-node Kafka cluster), we pass the broker hostname to get the metadata of other brokers in the cluster which is acting as leaders for the partitions we are trying to write to or read from. The producer or consumer that runs outside the Kafka cluster network, will be able to reach only the brokers that have their public DNS and not private DNS. The private DNS can be used for the producer or consumer if runs on the same network where the Kafka broker is running. Let’s assume a scenario, the producer or consumer is running on a different network or outside the network where the Kafka cluster is running and subsequently trying to establish the connection to the broker that has public DNS to publish or consume messages from the topic. The broker as mentioned above would provide the metadata of other brokers that have private DNS to the producer or consumer. Due to the private DNS of other brokers, the producer or consumer won’t be able to resolve the private DNS, which subsequently leads to the error LEADER_NOT_AVAILABLE. We can update the DNS on the /etc/host file but can’t be considered as real fix. To avoid the error more than a hack, we need to update the server.properties file on the Kafka instances for the following properties. The advertised.listeners is the important property that will help to solve the issue.

- listeners – comma separated hostnames with ports on which Kafka brokers listen to

- listeners – comma separated hostnames with ports which will be passed back to the clients. Make sure to include only the hostnames which will be resolved at the client (producer or consumer) side for eg. public DNS.

- security.protocol.map – includes the supported protocols for each listener

- broker.listener.name – listeners to be used for internal traffic between brokers.

For example

listeners=LISTENERS_PUBLIC://172.16.1.180:9092

advertised.listeners=LISTENERS_PUBLIC://172.16.1.180:9092

listener.security.protocol.map=LISTENERS_PUBLIC:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

inter.broker.listener.name=LISTENERS_PUBLIC

Hope you have enjoyed this read. Please like and share if you feel this composition is valuable.

Can be reached for real-time POC development and hands-on technical training at [email protected]. Besides, to design, develop just as help in any Hadoop/Big Data handling related task, Apache Kafka, Streaming Data etc. Gautam is a advisor and furthermore an Educator as well. Before that, he filled in as Sr. Technical Architect in different technologies and business space across numerous nations.

He is energetic about sharing information through blogs, preparing workshops on different Big Data related innovations, systems and related technologies.