Handling bad messages via DLQ by configuring JDBC Kafka Sink Connector

Any trustworthy data streaming pipeline needs to be able to identify and handle faults. This is especially true when IoT devices continuously ingest critical data/events into permanent storage such as an RDBMS for future […]

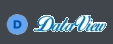

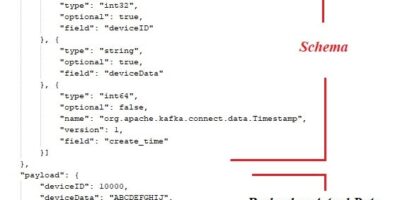

Streaming Data to RDBMS via Kafka JDBC Sink Connector without leveraging Schema Registry

In today’s M2M (machine-to-machine) communications landscape, there is strong demand for streaming digital data from heterogeneous IoT devices to various RDBMSs for further analysis via […]

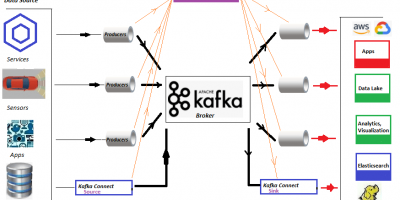

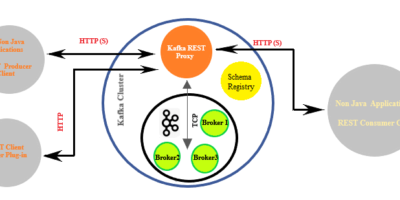

Confluent’s Kafka REST Proxy, The Silk Route for data movement to operational Kafka cluster

Beyond its publish-subscribe messaging functionality, Apache Kafka is gaining strong momentum as a distributed event streaming platform. It is being used for high-performance data pipelines, streaming analytics, […]

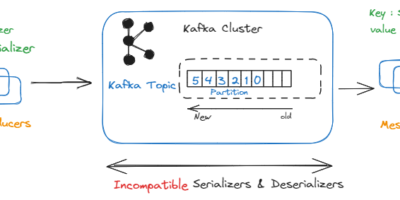

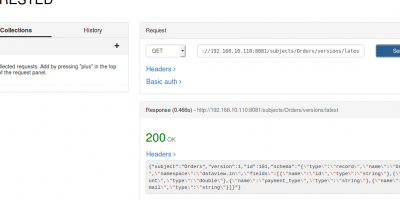

Coupling Schema Registry (Confluent) with Multi-Broker Apache Kafka Cluster

This article explains the steps for coupling Confluent Schema Registry with an existing, operational multi-broker Apache Kafka cluster (local deployment). Confluent is an integrated platform bundled with Apache Kafka and […]